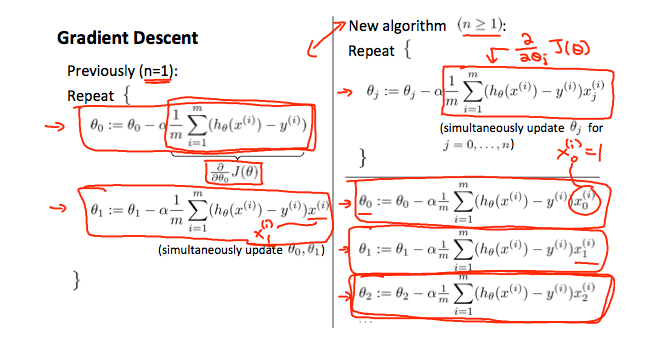

Gradient Descent for Multiple Variables in Octave. Oct 18, 2016: Gradient Descent for Linear Regression. One variable in.

Keep track of the cost function at every iteration: `costHistory(i) = cost(x, y, parameters)`. Feature scaling allows you to reach the global minimum faster. First question: the way I know to solve gradient descent, theta 0 and theta 1 should each use a different approach to get their values, as follows.
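The cost tracking and feature scaling described above can be sketched in Octave roughly as follows. This is a minimal illustration, not the course's reference solution: the toy data, the learning rate `alpha`, the iteration count `numIters`, and the anonymous `cost` function are all assumptions made up for the example. Note that once a column of ones is added to `X`, theta 0 is updated by the same rule as every other parameter, so no separate approach is needed.

```octave
% Hypothetical toy data: 4 examples, 2 features (not taken from ex1data2.txt).
X_raw = [2104 3; 1600 3; 2400 3; 1416 2];
y = [400; 330; 369; 232];
m = size(X_raw, 1);

% Feature scaling: give every column zero mean and unit standard deviation.
mu = mean(X_raw);
sigma = std(X_raw);
X_norm = (X_raw - mu) ./ sigma;      % relies on Octave broadcasting
X = [ones(m, 1), X_norm];            % prepend the intercept column x_0 = 1

alpha = 0.1;                         % assumed learning rate
numIters = 200;                      % assumed number of iterations
theta = zeros(size(X, 2), 1);
costHistory = zeros(numIters, 1);

% Squared-error cost J(theta) = 1/(2m) * sum((X*theta - y).^2)
cost = @(X, y, theta) sum((X * theta - y) .^ 2) / (2 * size(X, 1));

for i = 1:numIters
  % Simultaneous update of all parameters, theta 0 included.
  theta = theta - (alpha / m) * (X' * (X * theta - y));
  costHistory(i) = cost(X, y, theta);
end
```

Plotting `costHistory` against the iteration number is a quick way to check that the chosen `alpha` makes the cost decrease.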

`ex1data2.txt` - Dataset for linear regression with multiple variables.

In Octave you can multiply `x_j^(i)` by all the predictions element-wise (the `.*` operator), so the update can be written without an explicit loop over the examples. The rule of thumb is as follows: if you encounter something of the form `SUM_i f(x_i, y_i, ...) * g(a_i, b_i, ...)`, then you can easily vectorize it.

I'm doing Andrew Ng's course on Machine Learning and I'm trying to wrap my head around the vectorised implementation of gradient descent, with features `x_j^(i)` for j = 1, 2, ..., n.

Visualize the Data.
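To make the vectorisation rule of thumb concrete, here is a small sketch comparing the element-wise sum for a single parameter with the matrix form that handles all parameters at once. The matrices and the names `err`, `grad_j`, and `grad` are hypothetical values chosen for illustration.

```octave
% Hypothetical design matrix; the first column is the intercept x_0 = 1.
X = [1 2 3; 1 4 5; 1 6 8];
y = [1; 2; 3];
theta = [0.1; 0.2; 0.3];
m = size(X, 1);

err = X * theta - y;                 % prediction errors, one per example (m x 1)

% Element-wise form for a single parameter j:
% SUM_i (h(x^(i)) - y^(i)) * x_j^(i), computed with .* and sum
j = 2;
grad_j = sum(err .* X(:, j)) / m;

% Vectorised form for all parameters at once: each row of X' * err
% is the same sum as above for one value of j.
grad = (X' * err) / m;
```

Here `grad(j)` and `grad_j` are the same number; the matrix product simply performs the per-parameter sums in one step.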